What is Constitutional AI?

by Stephen M. Walker II, Co-Founder / CEO

What is Constitutional AI?

Constitutional AI is a set of techniques developed by the AI research lab Anthropic, built on reinforcement learning from AI feedback (RLAIF), that help align AI with human values. The techniques use self-supervision and adversarial training to teach an AI to behave according to a written set of principles, or "constitution," with far less explicit human labeling: human oversight enters mainly through the principles themselves.

Constitutional AI aims to embed legal and ethical frameworks into the model, much as a national constitution sets out foundational rules. In practice the "constitution" is a list of natural-language principles. The goal is to align AI systems with societal values, rights, and privileges, making them more ethically aligned and more likely to be legally compliant.

What is the background of Constitutional AI?

The name comes from the way the approach borrows from legal and ethical frameworks, particularly the principles found in national constitutions and other foundational legal documents. The objective is for AI operations to respect societal values, rights, and privileges, so that the resulting systems are ethically aligned and legally compliant.

Anthropic, an AI research company, developed the techniques grouped under the name Constitutional AI to align AI systems with human values, aiming to make them helpful, harmless, and honest. Training relies on self-supervision and adversarial training to teach the model a set of principles, or "constitution," so that humans supply the principles rather than labeling each harmful output.

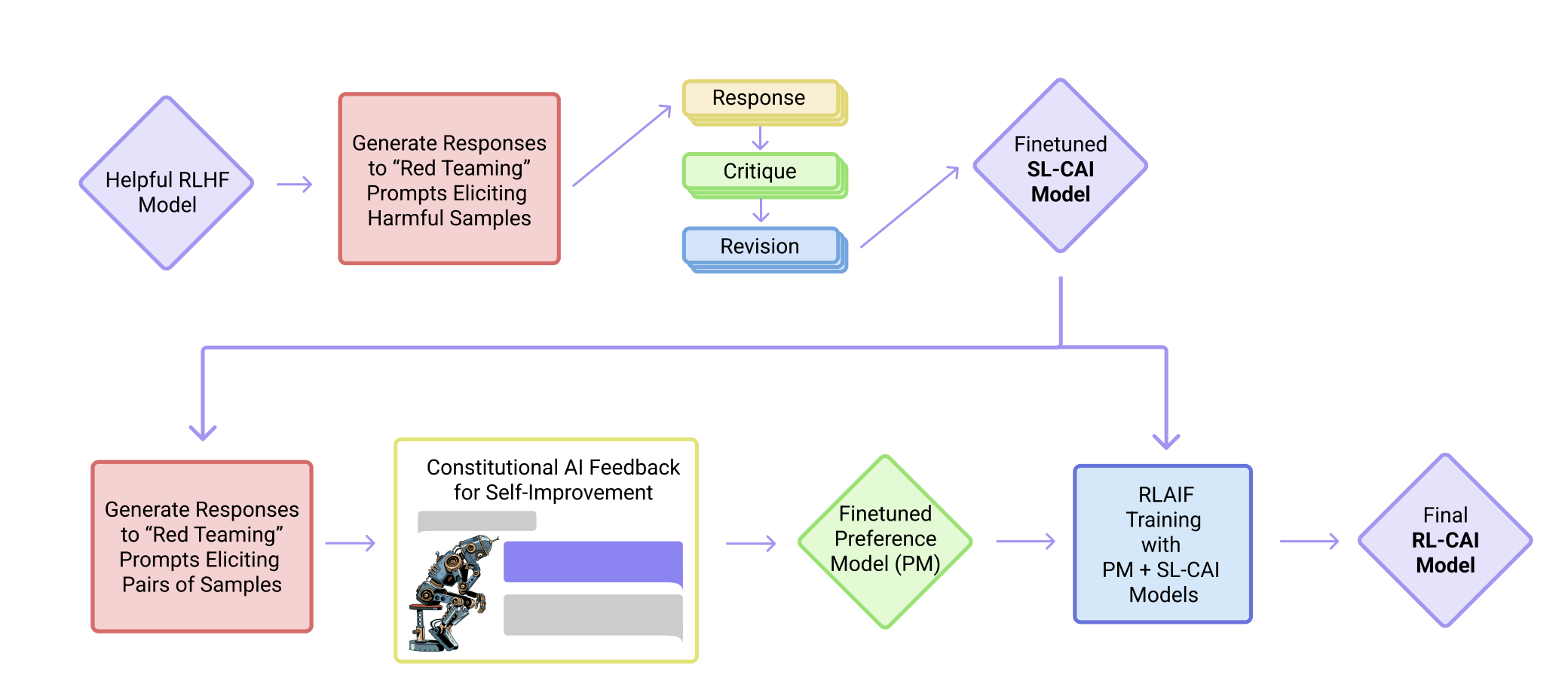

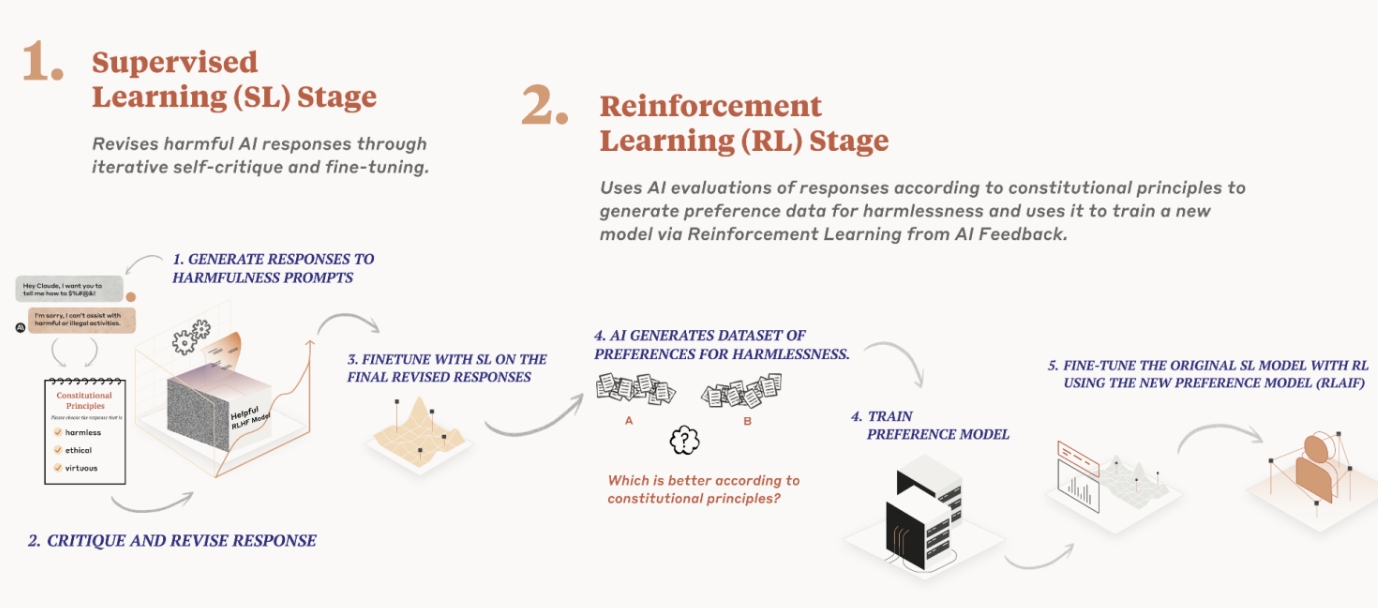

The process of creating a Constitutional AI involves two main stages: the Reflection stage (supervised learning) and the Reinforcement stage (reinforcement learning from AI feedback). In the Reflection stage, the model generates responses, critiques them against the constitution, and revises them; the revised responses are then used to fine-tune the model. In the Reinforcement stage, an AI model compares pairs of responses against the constitution, a reward model is trained on those AI preferences, and reinforcement learning then encourages behavior that aligns with the defined constitution.
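The Reflection stage described above can be sketched as a short loop. This is a minimal illustration under stated assumptions, not Anthropic's implementation: `generate` is a hypothetical stand-in for a language model call, `toy_generate` is a deterministic stub so the example runs without a model, and the prompt wording and the single principle are invented for illustration.

```python
import random
from typing import Callable, Dict, List

# Hypothetical stand-in for a language model call: prompt in, text out.
Generate = Callable[[str], str]


def critique_and_revise(
    prompt: str,
    principles: List[str],
    generate: Generate,
    rounds: int = 2,
    seed: int = 0,
) -> Dict[str, str]:
    """Produce one (prompt, revised response) pair for fine-tuning."""
    rng = random.Random(seed)
    response = generate(prompt)  # initial draft
    for _ in range(rounds):
        principle = rng.choice(principles)  # apply one principle per round
        critique = generate(
            f"Prompt: {prompt}\nResponse: {response}\n"
            f"Critique the response using this principle: {principle}"
        )
        response = generate(
            f"Prompt: {prompt}\nResponse: {response}\nCritique: {critique}\n"
            "Rewrite the response to address the critique."
        )
    return {"prompt": prompt, "completion": response}


def toy_generate(text: str) -> str:
    """Deterministic toy model (an assumption, not a real LLM)."""
    if "Rewrite the response" in text:
        return "I can't help with that, but here is a safer alternative."
    if "Critique the response" in text:
        return "The response could cause harm."
    return "Sure, here is how to do it."


example = critique_and_revise(
    "How do I pick a lock?",
    ["Choose the response that is least harmful."],
    toy_generate,
)
print(example["completion"])
```

In a real pipeline, many such pairs would be collected and used as the fine-tuning dataset; only the final revision is kept as the training target.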

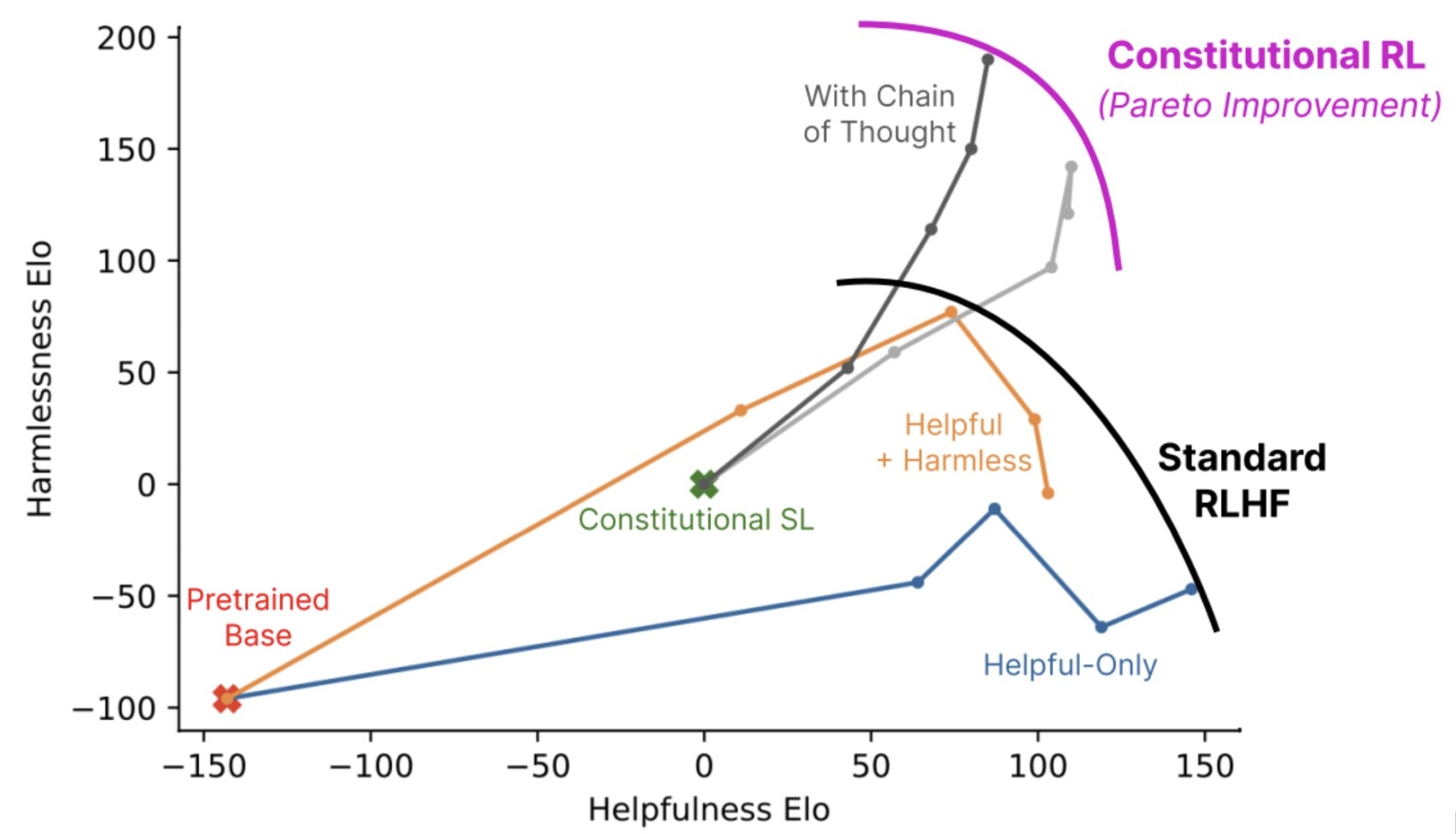

Constitutional AI is seen as a promising approach to imbue AI systems with values and make their behavior more predictable and transparent. It allows for more precise control of AI behavior with fewer human labels and can potentially improve the transparency of AI decision-making.

In short, Constitutional AI is a method for training AI systems to follow explicit principles, with the aim of making them ethically aligned and legally compliant and the potential to make them more transparent, accountable, and aligned with human values.

What are some examples of Constitutional AI in practice?

Anthropic's implementation of Constitutional AI, particularly with their model Claude, is an example of how AI can be trained to follow a set of principles, which can be as diverse as the UN Declaration of Human Rights or a company's Terms of Service.

The approach is designed to be adaptable to different "constitutions," which may sometimes have conflicting principles.
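One way to picture this adaptability is to treat a constitution as plain data that the training pipeline reads. The sketch below is illustrative only: the constitution names, the abbreviated principles, and the `critique_requests` helper are all hypothetical and are not quotes from any published constitution.

```python
from typing import Dict, List

# Hypothetical, abbreviated principle lists written for this example.
CONSTITUTIONS: Dict[str, List[str]] = {
    "rights_based": [
        "Prefer responses that respect freedom, equality, and dignity.",
        "Prefer responses that do not encourage discrimination.",
    ],
    "terms_of_service": [
        "Prefer responses that do not assist with prohibited uses.",
    ],
}


def critique_requests(constitution_name: str) -> List[str]:
    """Turn each principle of the chosen constitution into a critique request."""
    return [
        f"Identify ways the response conflicts with this principle: {principle}"
        for principle in CONSTITUTIONS[constitution_name]
    ]


# Swapping constitutions changes the data, not the pipeline code.
for name in CONSTITUTIONS:
    print(name, len(critique_requests(name)))
```

Because each request applies one principle at a time, principles that pull in different directions can coexist in the same list.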

How does Constitutional AI differ from other AI techniques?

Constitutional AI differs from other AI techniques in that the desired behavior is written down as explicit principles drawn from legal and ethical frameworks, with the aim of making the system ethically aligned and legally compliant.

Unlike reinforcement learning from human feedback (RLHF), which relies heavily on human preference labels, Constitutional AI uses self-supervision and adversarial training to teach AI to behave according to a set of principles or a "constitution."

This approach allows for more precise control of AI behavior with fewer human labels and can potentially improve the transparency of AI decision-making.
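The way AI feedback can stand in for human labels can be sketched as follows. This is a simplified illustration under stated assumptions: `judge` is a hypothetical model call that answers "A" or "B", `toy_judge` is a stub that simply prefers a refusal, and the `prompt`/`chosen`/`rejected` record layout is one common convention for preference data rather than a required format. A real pipeline might use the judge's probabilities instead of a hard choice.

```python
from typing import Callable, Dict

# Hypothetical judge: takes a comparison question, returns "A" or "B".
Judge = Callable[[str], str]


def label_preference(
    prompt: str,
    response_a: str,
    response_b: str,
    principle: str,
    judge: Judge,
) -> Dict[str, str]:
    """Ask an AI judge which response better follows the principle."""
    question = (
        f"Principle: {principle}\nPrompt: {prompt}\n"
        f"(A) {response_a}\n(B) {response_b}\n"
        "Which response better follows the principle? Answer A or B."
    )
    choice = judge(question).strip().upper()
    if choice not in {"A", "B"}:
        raise ValueError(f"Unexpected judge answer: {choice!r}")
    if choice == "A":
        chosen, rejected = response_a, response_b
    else:
        chosen, rejected = response_b, response_a
    return {"prompt": prompt, "chosen": chosen, "rejected": rejected}


def toy_judge(question: str) -> str:
    """Toy judge (an assumption, not a real model): prefers a refusal."""
    a_line = next(
        line for line in question.splitlines() if line.startswith("(A)")
    )
    return "A" if "can't" in a_line else "B"


pair = label_preference(
    "How do I pick a lock?",
    "Sure, here is how.",
    "I can't help with that.",
    "Choose the less harmful response.",
    toy_judge,
)
print(pair["chosen"])
```

Records like `pair` are what a reward model would be trained on before the reinforcement learning step; no human has to label the individual comparison.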

What are the benefits of using Constitutional AI?

- Ethical Alignment — By integrating legal and ethical frameworks into the model, Constitutional AI is intended to keep AI systems in line with societal values, rights, and privileges.

- Legal Compliance — The approach helps create systems that are legally compliant, reducing the risk of unintended consequences or violations of laws and regulations.

- Transparency — Constitutional AI can potentially improve the transparency of AI decision-making by providing a clear set of principles or "constitution" that guides the system's behavior.

- Accountability — By making AI systems more transparent and ethically aligned, Constitutional AI can help promote accountability in AI decision-making.

- Adaptability — The approach is designed to be adaptable to different "constitutions," allowing for flexibility in defining the principles that guide AI behavior.

- Reduced Human Labeling — Unlike reinforcement learning from human feedback (RLHF), Constitutional AI uses self-supervision and adversarial training to generate much of its own training signal, reducing the need for explicit human labeling.

- Improved Predictability — Because the guiding principles are written down explicitly, the behavior of the resulting system can be easier to anticipate and audit.

How is Constitutional AI different from RLHF and RLAIF?

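Constitutional AI is closely related to Reinforcement Learning from Human Feedback (RLHF) and Reinforcement Learning from AI Feedback (RLAIF); its Reinforcement stage is itself a form of RLAIF. It differs from plain RLHF and generic RLAIF in several ways: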

- Integration of Legal and Ethical Frameworks — Constitutional AI focuses on integrating legal and ethical frameworks into the model, making the system ethically aligned and legally compliant. In contrast, RLHF and RLAIF primarily focus on improving the quality of AI responses through human or AI feedback, without necessarily considering legal and ethical implications.

- Self-Supervision and Adversarial Training — Constitutional AI uses self-supervision and adversarial training to teach AI to behave according to a set of principles or a "constitution." This approach allows for more precise control of AI behavior with fewer human labels, potentially improving the transparency of AI decision-making. In contrast, RLHF relies heavily on human preference labels, which can be time-consuming and resource-intensive to collect, and generic RLAIF replaces those labels with AI feedback that need not be guided by an explicit written constitution.

- Adaptability to Different "Constitutions" — Constitutional AI is designed to be adaptable to different "constitutions," allowing for flexibility in defining the principles that guide AI behavior. This makes it suitable for a wide range of applications, where legal and ethical considerations may vary. In contrast, RLHF and RLAIF are more focused on improving the quality of AI responses, without necessarily considering adaptability to different contexts or "constitutions."

- Reduced Human Labeling — Constitutional AI reduces the need for explicit human labeling because the critiques, revisions, and preference comparisons used in training are produced by AI models. RLHF, in contrast, depends on human preference labels, which can be time-consuming and resource-intensive to collect.

Constitutional AI is more focused on ensuring that AI systems adhere to legal and ethical frameworks, while also improving the transparency of AI decision-making through self-supervision and adversarial training.

In contrast, RLHF and RLAIF are primarily concerned with improving the quality of AI responses through human or AI feedback, without necessarily considering legal and ethical implications or adaptability to different contexts or "constitutions."

FAQs

How does Constitutional AI ensure ethical alignment in AI systems?

Constitutional AI promotes ethical alignment by training the model against written principles drawn from legal and ethical frameworks, so that its behavior respects societal values, rights, and privileges. It does not guarantee compliance, but it is intended to reduce the risk of unintended consequences or violations of laws and regulations.

What is the role of self-supervision and adversarial training in Constitutional AI?

Self-supervision and adversarial training play a crucial role in Constitutional AI by teaching AI to behave according to a set of principles or a "constitution" without requiring humans to label individual outputs. This approach allows for more precise control of AI behavior with fewer human labels, potentially improving the transparency of AI decision-making.

How can Constitutional AI improve the transparency of AI decision-making?

Constitutional AI can improve the transparency of AI decision-making by providing a clear set of principles or "constitution" that guides the system's behavior. This makes it easier for users to understand what the AI system is trained to do and to check its behavior against legal and ethical frameworks, promoting accountability in AI decision-making. Additionally, because the self-critiques and revisions produced during training are written in natural language, the training signal itself can be inspected.