Analyzing Retool's State of AI 2023 Report

by Stephen M. Walker II (Co-Founder / CEO)

Retool recently surveyed more than 1,500 tech professionals to gauge sentiment and adoption trends around AI in 2023. In this post, I analyze key findings from that survey, covering current usage, tooling preferences, and outlook on topics from governance to jobs. Read on for my synthesis of Retool's data, how companies are using generative AI tools today, and what they expect as the landscape evolves.

Methodology

Most responses come from small businesses with fewer than 100 employees. Even so, the 1,578 responses point to clear trends: a move toward AI-powered features, the dominance of OpenAI's foundation models, concerns over data security and model accuracy, and a growing emphasis on ethical AI governance.

| Attribute | Details |
|---|---|
| Survey Date | August 2023 |
| Number of Respondents | 1,578 |
| Top 5 Industries | 1. Technology (39%) 2. Consulting/Professional Services (12%) 3. Financial Services (10%) 4. Consumer Goods (5%) 5. Media and Communications (5%) |
| Top 5 Teams | 1. Engineering (38%) 2. Operations (22%) 3. Product (12%) 4. IT (9%) 5. Data (7%) |
| Top 5 Roles | 1. Mid-senior level (28%) 2. Director/manager (23%) 3. Entry level (20%) 4. C-suite (17%) 5. VP (3%) |
| Company Size Breakdown | 1-99 employees (60%), 100-999 employees (26%), 1000+ employees (14%) |

Summary of State of AI 2023 Report

AI is a pivotal part of the tech landscape in 2023, with widespread but cautious adoption across industries. Generative AI in particular has gone mainstream, with large language models like OpenAI's GPT-4 at the forefront.

Only 20% of companies represented in the survey have no plans to ship AI-powered features, making GenAI the new status quo for consumers and products. Half of the companies building generative features are still in development and had not released them to customers as of August. This is a good reminder that GitHub Copilot spent 3 years in product development before its GA release.

The survey reveals a nuanced perception of AI: it's seen as slightly overrated, yet indispensable for transforming industries and jobs. Despite concerns about AI replacing jobs, there's a strong belief in its potential to significantly change various industries within the next five years.

The adoption of AI in companies is still in early stages, with a focus on internal use cases. Key pain points include model accuracy and data security.

Tools like GitHub Copilot and Grammarly lead AI tool popularity, reflecting AI's increasing role in knowledge worker productivity tasks.

Top GenAI-Powered Tools

| AI Tool | Usage Percentage (%) |
|---|---|
| GitHub Copilot | 30.5 |
| Grammarly | 10.2 |
| Notion AI | 7.3 |
| Retool AI | 5.3 |
| Canva Magic | 4.6 |
| Copy.ai | 4.4 |
| Cursor | 3.0 |
| Fin by Intercom | 2.7 |
| Microsoft 365 Copilot | 2.2 |

The survey highlights a collective eagerness within the tech community to explore AI's possibilities, coupled with a call for greater focus on governance and ethics.

Popular models like OpenAI's GPT-4 are widely used, but a smaller, impactful segment of tech professionals is driving significant advancements through prompt engineering and evaluations, using specialized tools like Klu.ai.

Evaluating Prompt Performance

| Detail | Percentage (%) |
|---|---|
| Test Manually | 35.2 |
| Not Tracking Yet | 23.4 |
| Not Applicable | 17.4 |
| Built In-house Tooling | 11.0 |
| Use a Solution Like Klu | 6.5 |
| Evals | 6.3 |

This niche but influential group is pivotal in pushing the boundaries of AI's capabilities, indicating a future where these advanced techniques become mainstream in AI development and application.
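To make the gap between "Test Manually" and "Evals" concrete, here is a minimal sketch of what an automated prompt evaluation can look like. It is illustrative only: the test cases, the `fake_model` stand-in, and the substring-match scoring rule are all assumptions made for this example, not anything described in Retool's survey or any particular tool's API. A real setup would replace `fake_model` with a call to an actual LLM and likely use a richer scoring method.

```python
from typing import Callable

# Hypothetical test cases: (prompt, expected substring) pairs invented for illustration.
TEST_CASES = [
    ("What is 2 + 2?", "4"),
    ("Name the capital of France.", "Paris"),
    ("Spell 'cat' backwards.", "tac"),
]


def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM call; returns canned answers so the sketch runs offline."""
    canned = {
        "What is 2 + 2?": "2 + 2 equals 4.",
        "Name the capital of France.": "The capital of France is Paris.",
        "Spell 'cat' backwards.": "act",  # deliberately wrong to show a failing case
    }
    return canned.get(prompt, "")


def run_eval(model: Callable[[str], str], cases: list[tuple[str, str]]) -> float:
    """Return the fraction of cases whose output contains the expected substring."""
    passed = sum(1 for prompt, expected in cases if expected in model(prompt))
    return passed / len(cases)


score = run_eval(fake_model, TEST_CASES)
print(f"pass rate: {score:.0%}")
```

Even a small harness like this lets a team rerun the same cases after every prompt change instead of eyeballing outputs, which is the main difference from manual testing.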

Insights from the State of AI 2023

| Insight | Details |
|---|---|
| Large companies still more risk-averse | Larger companies tend to have more concerns about data security and are more likely to have strict data policies in place. Smaller companies may be more agile in adopting AI but might not prioritize or have the resources for stringent data security measures. |
| Leadership more bullish on opportunity | Upper management, including VPs and C-suite executives, view AI more favorably compared to those in more hands-on roles like software engineers and entry-level employees. This suggests a possible disconnect between the expectations of AI at a strategic level versus the practical implementation challenges at the operational level. The success of most AI projects at most businesses will regress to the mean. |
| New products disrupting Web 2.0 sites | The data shows a decline in the use of traditional resources like StackOverflow, with AI tools such as GitHub Copilot and ChatGPT being cited as primary reasons. This shift could indicate a transformation in how knowledge is accessed and utilized in the tech sector, moving from community-driven Q&A platforms to AI-assisted coding and problem-solving. |
| Secretive use prevalent in large, international companies | A significant number of respondents (34.4%) report using AI secretly at work. This hidden usage of AI could be a marker of a broader trend where employees explore and leverage AI capabilities beyond the official channels or policies of their organizations. This behavior might be driven by the desire to stay ahead of the curve, test new tools, or circumvent bureaucratic processes. At Klu, we see this happening most at international companies where employees may not possess expert fluency in the organization's primary language. |
| GPT-4 is the king | The majority of organizations are using OpenAI's models and not self-hosting. A smaller, yet significant group is engaging in more advanced practices like prompt engineering and using evaluation tools like Klu.ai. This minority could be key drivers of innovation in AI, experimenting with and pushing the boundaries of what these technologies can do. |
| Internal use, then external products | Over 50% more respondents report adopting AI for internal use cases than for external ones. This could indicate that companies are more comfortable experimenting with AI internally before deploying AI-driven solutions in customer-facing scenarios, likely due to concerns over reliability, user experience, or regulatory issues. |

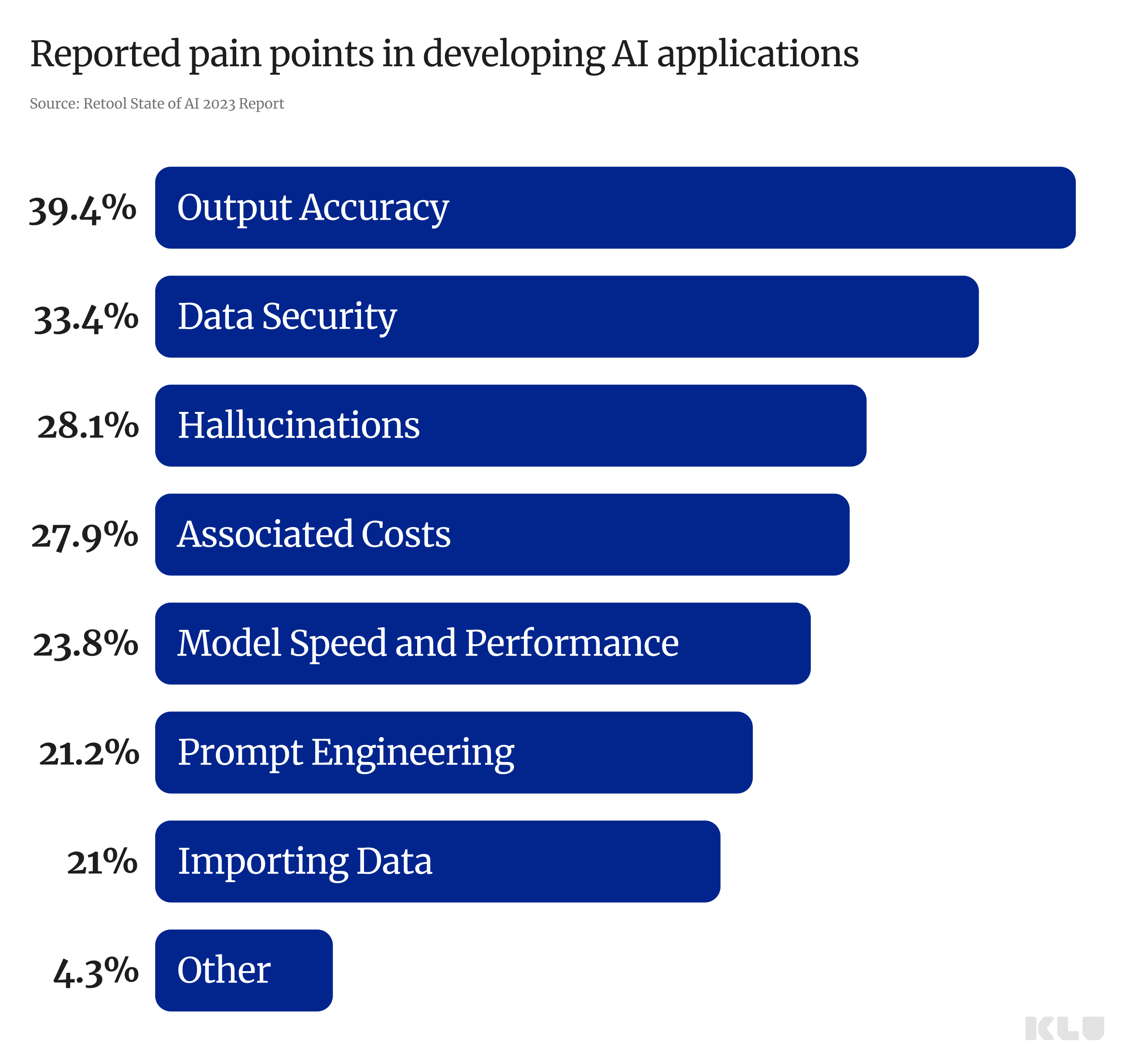

Pain Points in Developing AI Applications (State of AI 2023)

In this section, we dive into the challenges professionals face when developing AI applications, as reported in the State of AI 2023 survey. We're unsurprised to see these issues, since they are the pain points we solve for with Klu.ai. Still, it helps to understand them as the issues one might encounter when developing features powered by LLMs.

Model Output Accuracy — 39.4%

The accuracy of the model's output is the most significant concern, indicating a strong need for reliable predictions and decisions.

Data Security — 33.4%

Ensuring the security of data used in AI systems is the second most common challenge, highlighting the importance of data protection measures.

Hallucinations — 28.1%

This refers to AI models generating false or misleading information, which is a considerable challenge for developers.

Associated Costs — 27.9%

The costs associated with developing and maintaining AI systems are a major concern, suggesting a barrier to entry or expansion for some organizations.

Model Speed and Performance — 23.8%

The efficiency and speed of AI models are critical, especially as applications become more complex and data-intensive.

Prompt Engineering — 21.2%

Crafting effective prompts for AI models is a significant concern, implying a need for better understanding and tools to interact with AI.

Importing Your Data to Work with LLMs — 21%

Integrating personal or organizational data with large language models poses a significant challenge, indicating potential issues with compatibility or data handling.

Other — 4.3%

A small percentage of concerns fall outside the specified categories, which could include a wide range of less common or unique challenges.
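One detail worth checking when reading the figures above is whether they are shares of a single total. The short sketch below adds them up and ranks them. The percentages are copied from this section; the inference that respondents could select more than one pain point is an assumption drawn from the sum exceeding 100%, not something stated in this post.

```python
# Pain-point percentages as listed in this section.
pain_points = {
    "Model output accuracy": 39.4,
    "Data security": 33.4,
    "Hallucinations": 28.1,
    "Associated costs": 27.9,
    "Model speed and performance": 23.8,
    "Prompt engineering": 21.2,
    "Importing your data to work with LLMs": 21.0,
    "Other": 4.3,
}

# A total well above 100 suggests a multi-select question (assumption, see lead-in).
total = sum(pain_points.values())

# Rank from most to least cited.
ranked = sorted(pain_points.items(), key=lambda item: item[1], reverse=True)

print(f"sum of percentages: {total:.1f}")
for name, pct in ranked:
    print(f"{pct:5.1f}%  {name}")
```

If the question was indeed multi-select, each figure is best read as "the share of respondents who named this issue" rather than as a slice of one pie.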

Most Popular AI Platforms

| Dev Tool | Usage Percentage (%) |
|---|---|
| Hugging Face | 25.8 |
| AWS Bedrock | 11.5 |
| Weights & Biases | 7.5 |
| Together.ai | 6.4 |

Most Popular Open Source AI Dev Frameworks

| Framework | Usage Percentage (%) |
|---|---|
| LangChain | 18.4 |
| LlamaIndex | 7.7 |
| Haystack | 5.1 |
| Unstructured | 3.8 |